Means of Verification

Image Comparison:

As T-Plan Robot works on the desktop image level, image comparison is one of the key means of verifying behaviour of the system under automation. The idea is that during the script design you save image templates of the system under automation or its components for reference. When the script is executed, the desktop image is compared to the template(s) to verify that it displays the expected result.

Robot supports four image comparison algorithms:

- image search

- object search

- Tesseract OCR

- histogram-based comparison

Image Comparison may be invoked in the scripting language, or Java, through the standard Click, CompareTo, Screenshot and WaitFor commands.

Text Recognition:

T-Plan Robot also provides the option of text recognition through its integration with the Tesseract and ABBYY OCR engines. This allows recognition of text displayed on the connected remote desktop or device which can then optionally be compared against a predefined string.

The Tesseract engine is exposed in the scripting language as a standard image comparison method called "tocr" and can be employed through the standard Click, CompareTo, Screenshot and WaitFor commands.

The engine is not part of the T-Plan Robot Enterprise product and must be downloaded and installed separately. T-Plan Robot Enterprise then only needs to know its location which can be configured in the OCR panel of the Preferences window.

Object Search:

Object search allows Robot to search for and locate items of one or more specific colours, optionally within predefined size boundaries.

Object Search and Static Image Testing

T-Plan Robot Enterprise v2.2 introduces two new features which target the testing of imaging systems such as GIS (Geographic Information System) and CAD (Computer Aided Design) applications.

The first one is the Static Image Client, which allows automation against images loaded from the file system. The client behaves the same way as a live desktop client such as the RFB/VNC one. Image files may be loaded interactively through the Login dialog, or in an automated manner through the "-c" CLI option or by calling the Connect command with an argument in the form of "file://<path_to_the_image>". Static image testing may be applied in a number of scenarios:

- Testing of images, such as screen shots, photographs, maps, paintings and outputs of various image producing systems,

- Testing of systems which update an image file (for example a status screen shot). Since the client periodically checks the image file size and time stamp and reloads the image whenever a change is detected, it may be used to test systems which generate output into an image file (or image files).

- Debugging of failed image comparisons from live desktop testing. If you modify your script to save the VNC desktop image to a PNG file whenever an image comparison fails unexpectedly, for example using the Screenshot command, you can open the file later and reapply the image comparison to find out what went wrong. You can then recreate the image template from the saved image and verify the new functionality using the standard GUI tools.
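The change detection described in the second scenario (checking the image file size and time stamp) can be illustrated with a minimal Python sketch. This is not Robot's implementation; the function names `file_signature` and `needs_reload` are hypothetical and only show the general idea of comparing a stored size and time stamp against the current ones.

```python
import os


def file_signature(path):
    """Return the (size in bytes, modification time) pair for a file."""
    info = os.stat(path)
    return (info.st_size, info.st_mtime_ns)


def needs_reload(path, last_signature):
    """True when the size or time stamp differs from the last seen pair.

    Assumption: a poller would call this periodically and reload the
    image whenever it returns True.
    """
    return file_signature(path) != last_signature
```

A polling loop would store the signature after each load and call `needs_reload` on every tick, reloading the image only when the pair has changed.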

Testing of images has a few specific aspects. Unlike live desktops, static images don't consume key events, so the Press and Type/Typeline actions are disabled. If the image is truly static, meaning that it is not being updated by an outside process, it also makes no sense to wait for an update event through the WaitFor command.

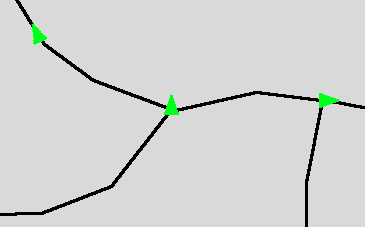

The second feature is the Object Search image comparison method. It was designed to locate objects of a particular color or a range of colors. Though this approach is not suitable for classic GUI components, it performs well on low color outputs from imaging systems such as maps, drawings, schemes, etc. Object Search is typically applied to images loaded through the Static Image Client or to live VNC desktops displaying the target image.

The method accepts an optional template, which must contain exactly one object of the specified color. When a template is specified, the method filters the list of located objects to leave just those whose shape is similar to the template image, up to the ratio specified by the passrate parameter. If the rotations parameter is also specified and is greater than 1, the object list is matched against the list of template shapes rotated the specified number of times. This makes it possible to build test tasks like "search for all green rectangles which might be in any level of rotation".

The object color is specified by the color parameter. The range of accepted object colors is specified by the tolerance parameter, which is similar to the one used by the Tolerant Image Search. It is a number between 0 and 255 which indicates the maximum amount by which the Red, Green and Blue components of a pixel may differ for its color to be considered equivalent to the object color specified by the color parameter. Be aware that the higher the tolerance value, the higher the probability of false shape detections. In most scenarios the value should be in the [0, 100] range, depending on how much the object changes, for example as a result of blurring caused by rotation. If the parameter is not specified, it defaults to zero and the algorithm looks for solid color objects only. For a complete specification of algorithm parameters see the Object Search specification.
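To make the tolerance rule concrete, here is a minimal Python sketch of a color equivalence check. It assumes that the tolerance is applied to each of the Red, Green and Blue components independently, which is one reading of the description above and not a confirmed detail of Robot's algorithm. The helper names `parse_rgb` and `within_tolerance` are hypothetical.

```python
def parse_rgb(hex_color):
    """Convert an 'RRGGBB' string such as '01FF19' to an (r, g, b) tuple."""
    return tuple(int(hex_color[i:i + 2], 16) for i in (0, 2, 4))


def within_tolerance(pixel, target, tolerance=0):
    """True when every RGB component differs from the target by at most
    `tolerance` (assumed per-component comparison, range 0 to 255)."""
    return all(abs(p - t) <= tolerance for p, t in zip(pixel, target))


# The object color used in the example script of this section.
target = parse_rgb("01FF19")

# A blurred green pixel is accepted with tolerance 100 but rejected with
# the default tolerance of 0, which accepts the solid color only.
blurred = (90, 200, 60)
accepted_with_tolerance = within_tolerance(blurred, target, 100)
accepted_without_tolerance = within_tolerance(blurred, target)
```

Under this reading, raising the tolerance widens the accepted color cube around the target color, which is why high values increase the chance of false shape detections.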

As an example let's consider a map-like image with black paths and green triangle objects:

Our goal is to find out the number and location of green triangles. This is a perfect task for the static image client combined with the object search algorithm. The script code follows.

# Make the script look for template images in the same dir as the script

Var _TEMPLATE_DIR="{_SCRIPT_DIR}"

# Connect to the map image.

Connect file://{_SCRIPT_DIR}/map.png

# Locate all triangles and highlight them on the screen

Compareto "triangle.png" method="object" passrate="85" rotations="40" color="01FF19" tolerance="100" draw="true"

# Pause the script to allow to review the results.

# Resuming will finish the script and clear up the drawings.

# Should you need to work with the objects further on, their number

# and coordinates are available through variables populated by the "object" method.

Pause

All commands accept the same set of image comparison parameters. T-Plan Robot Enterprise supports dynamic image collections represented by single images or folders. As the script refers to the collection by a simple folder name, this mechanism makes it possible to add, remove and edit template images inside the image folder without having to update the script code. When the script is executed, each collection is searched recursively for Java supported images of lossless format (PNG, BMP, WBMP, GIF) and the specified image comparison action is performed on the generated image list. Image collections may of course be freely combined with single images or other image collections in the old style semicolon separated file lists. See the image collection specification for examples.
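The way a collection turns into an image list can be sketched in Python as follows. This is an illustration of the described behaviour (recursive search, lossless formats only, semicolon separated lists), not Robot's actual code; the function names and the sorted ordering are assumptions made for the example.

```python
from pathlib import Path

# Lossless formats named in the text above.
LOSSLESS_EXTENSIONS = {".png", ".bmp", ".wbmp", ".gif"}


def expand_collection(entry):
    """Expand a single image path or a folder into a list of image files.

    A folder is searched recursively; a single file is returned as is.
    """
    path = Path(entry)
    if path.is_file():
        return [path]
    return sorted(
        p for p in path.rglob("*")
        if p.is_file() and p.suffix.lower() in LOSSLESS_EXTENSIONS
    )


def expand_template_list(spec):
    """Expand a semicolon separated list of images and image folders."""
    images = []
    for entry in spec.split(";"):
        entry = entry.strip()
        if entry:
            images.extend(expand_collection(entry))
    return images
```

Because the list is rebuilt from the folder contents on each call, adding or removing a file in the folder changes the result without any change to the caller, which mirrors the benefit described above.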

Robot also creates image meta data for each newly created or updated template image. The meta data file for example contains the original template image location on the screen which allows the test scripts to test whether the component has moved or not. Meta data also defines the best click point for the eventual "find and click" type of tasks. The click point defaults to the image center and may be customized in the GUI. For more information see the image meta data specification.

The comparison is considered to pass when at least one template from the list produces a match. This allows one graphical object to be represented by multiple images, for example where one script is being executed against various environments (operating systems) or when the verified component is known to change its state (appearance).
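The "at least one template matches" rule amounts to a logical OR over the template list. The short Python sketch below shows that rule only; `comparison_passes` and the `matches` callback are hypothetical stand-ins for whichever comparison method is actually applied to each template.

```python
def comparison_passes(templates, matches):
    """True when at least one template in the list produces a match.

    `matches` is a caller supplied function taking one template and
    returning True or False (an assumption made for this sketch).
    """
    return any(matches(template) for template in templates)


# Example: one button captured on two operating systems. The stub matcher
# pretends that only the Linux variant is currently on the screen.
templates = ["button_windows.png", "button_linux.png"]
result = comparison_passes(templates, lambda t: t == "button_linux.png")
```

An empty template list never passes under this rule, since there is no template that could produce a match.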

The default location of template images in the file system is defined by the _TEMPLATE_DIR variable and defaults to the user home folder (configurable in the Preferences->Scripting->Language window). All template images and collections specified in a relative way will be resolved against this directory. To set the folder to a custom location, simply set the variable at the beginning of your script using the Var command. A favourite trick is to put images into the same folder as the test script and set the template path to this location as follows. This makes it possible to create a relocatable test suite which is independent of any fixed file paths:

Var _TEMPLATE_DIR="{_SCRIPT_DIR}"

The CompareTo, Screenshot and WaitFor windows make it easy to construct and maintain the commands as well as to create and edit the template image(s). Images and commands may also be generated through the Component Capture feature. For the full specification of individual parameters refer either to the window help or to the CompareTo command specification.